Written by: Murilo Machado, DevSecOps Lead - spriteCloud, 2026

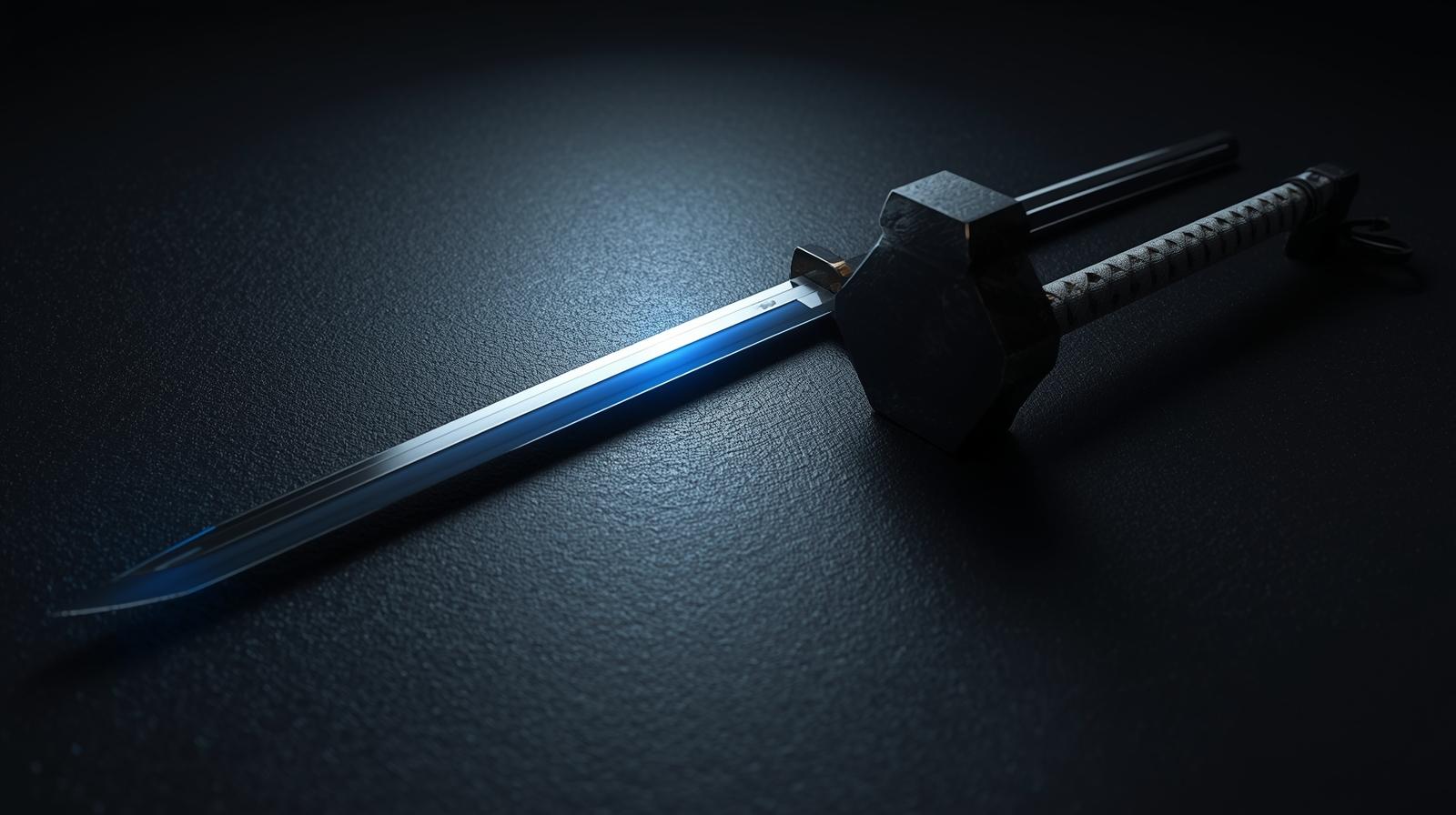

A few years ago, we published an article asking a critical question: "Think like a tester: Can we trust AI-driven test case generation yet?" At the time, we compared AI testing tools to a newly forged weapon. We wondered if they were a perfectly crafted katana ready to slice through feature production, or just a blunt hammer slamming blindly against every scenario.

We noted the incredible theoretical advantages, such as reduced manual effort and increased coverage, but we also highlighted significant pitfalls. AI simply did not "think like a tester." It lacked imagination, empathy, and the intuition required to find the truly dangerous edge cases.

Fast forward to today, and the landscape has evolved dramatically. The AI of 2026 is no longer a blunt hammer. But because the tools have become so incredibly fast and efficient, a dangerous new misconception has taken root in boardrooms and development teams: "If the AI writes the tests, we don’t need deep QA expertise anymore."

Make no mistake: AI is an exceptional tool, but it can only take you as far as your own awareness reaches. If you assume that AI will automatically fill your knowledge gaps, or that a lack of foundational QA understanding won't hold you back, you are walking into a trap. In fact, wielding modern AI effectively requires more foundational QA knowledge, not less.

The 2026 Reality: The "Third Wave" of AI Testing

To understand why foundational QA knowledge is non-negotiable today, we have to look at what AI testing tools are actually doing. We have moved past simple generative AI (where a tool just writes a Selenium script for you) and entered the "Third Wave" of test automation.

Here is what the modern testing battlefield actually looks like:

- Agentic Workflows (The Rise of QA Agents): Platforms now feature fully autonomous agents. Instead of giving them step-by-step instructions, you give them a goal (e.g., "Ensure the checkout process never charges a user twice"). The AI agent explores the application, designs the test paths, and executes them autonomously.

- From "Self-Healing" to "Self-Explaining": A few years ago, AI tools would silently fix a broken UI locator. Today, AI provides a failure narrative. It analyses logs, network traces, and DOM diffs to tell you exactly why a test broke, categorising it as an app bug, an environment issue, or testing debt.

- Synthetic Data Generation: AI now instantly generates complex, privacy-safe, and highly contextual test data (like compliant healthcare records or diverse financial transactions) without risking PII leaks.
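The difference between a scripted test and a goal becomes concrete once the goal is written down as an invariant that every explored path must satisfy. Below is a minimal Python sketch of the "never charges a user twice" goal. It assumes a hypothetical list of charge records captured during a run; the names `Charge` and `find_double_charges` are illustrative and not taken from any particular platform.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Charge:
    """Hypothetical charge record captured during an exploratory run."""
    order_id: str
    amount_cents: int


def find_double_charges(charges: list[Charge]) -> list[str]:
    """Return order IDs that were charged more than once (invariant violations)."""
    counts = Counter(charge.order_id for charge in charges)
    return sorted(order_id for order_id, n in counts.items() if n > 1)


# Illustrative data only: order "A-2" is charged twice.
observed = [
    Charge("A-1", 1999),
    Charge("A-2", 4500),
    Charge("A-2", 4500),
]
violations = find_double_charges(observed)
print(violations)
```

However the agent reaches checkout, the run passes only if `violations` is empty. Someone still has to decide that this is the invariant worth checking.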

Because AI now handles the heavy lifting of agentic exploration and data generation, teams spend drastically less time on manual, hands-on keyboard execution. But this speed comes with a massive caveat.

The Multiplier Effect: Garbage In, Garbage Out at Scale

AI is a multiplier, not a substitute. It makes testing faster, but if your team lacks an understanding of core Quality Assurance principles, handing them an AI tool is like giving a sports car to someone who doesn't know how to drive. You will go very fast, but you will inevitably crash.

As we noted in our previous article, AI lacks human imagination. We used the example of an AI testing a video game: it might successfully test running, driving, and shooting, but it lacks the human intuition to place a character on the train tracks just to see what happens when a train hits them.

This remains true. AI is incredibly obedient. If a junior developer without QA experience prompts an AI agent to generate tests for a banking login page, the AI will happily oblige. But without human knowledge of boundary value analysis, equivalence partitioning, or risk-based testing, the AI might generate 50 redundant "happy path" tests. It will completely miss the critical edge cases, like simultaneous logins from different IP addresses, giving your stakeholders a false sense of security.
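To make the contrast with 50 redundant tests concrete, here is a minimal Python sketch of boundary value analysis and equivalence partitioning applied to a password length rule. The 8 to 64 character limits and the function names are assumptions chosen for illustration, not requirements from any real banking system.

```python
def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Boundary value analysis: just below, on, and just above each limit."""
    candidates = {minimum - 1, minimum, minimum + 1,
                  maximum - 1, maximum, maximum + 1}
    # Negative lengths are not meaningful inputs, so drop them.
    return sorted(v for v in candidates if v >= 0)


def partition(length: int, minimum: int, maximum: int) -> str:
    """Assign a length to one of three equivalence classes."""
    if length < minimum:
        return "too_short"
    if length > maximum:
        return "too_long"
    return "valid"


MIN_LEN, MAX_LEN = 8, 64  # assumed limits, for illustration only

for length in boundary_values(MIN_LEN, MAX_LEN):
    print(length, partition(length, MIN_LEN, MAX_LEN))
```

Six targeted lengths touch all three classes and both edges of the valid range. This is the kind of instruction a tester can hand to an AI agent instead of an open-ended "generate login tests".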

Faster execution of bad tests just creates a mountain of automated technical debt.

The New QA Mandate: Orchestrator Over Executor

When the AI acts as the hands-on executor, the QA professional’s role shifts fundamentally. They must step up as the Orchestrator, Architect, and Evaluator. This shift means that the team using the AI must have deep expertise in the domain they are working in.

- You must define the exact Test Intent: AI can generate 1,000 test cases in a minute, but QA teams must focus on defining the intent and strict oracles (expected outcomes). If you do not understand equivalence classes, you cannot properly instruct the AI agent on what edge cases to hunt for.

- You must evaluate the AI's output: In 2026, reviewing AI-generated test code often requires more expertise than writing it from scratch. You must be able to spot logic errors, security gaps, and inefficiencies to ensure the AI isn't just hallucinating a pass.

- You must provide the business context: AI understands the DOM and the API endpoints, but it does not understand your business reality. It does not know that a microsecond delay in a trading module is critical, while a visual glitch in the footer is low-priority. Human testers must set the strategy.
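Business context of this kind can be handed to a tool as explicit, human-assigned risk ratings. The sketch below uses a simple likelihood times impact score, a common risk-based testing heuristic. The `Finding` class, the 1 to 5 scale, and the ratings themselves are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """Hypothetical finding with human-assigned ratings on a 1-5 scale."""
    name: str
    likelihood: int
    impact: int

    @property
    def risk(self) -> int:
        # Simple heuristic: risk score = likelihood x impact.
        return self.likelihood * self.impact


def prioritise(findings: list[Finding]) -> list[Finding]:
    """Order findings from highest to lowest risk score."""
    return sorted(findings, key=lambda f: f.risk, reverse=True)


# Ratings below are invented for illustration.
findings = [
    Finding("Footer logo misaligned", likelihood=4, impact=1),
    Finding("Trading order latency spike", likelihood=2, impact=5),
]
for f in prioritise(findings):
    print(f.risk, f.name)
```

The footer glitch is more likely to occur, yet the trading delay ranks first because its impact rating dominates. Those ratings come from people who know the business, not from the DOM.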

Conclusion: Trust the AI, But Steer with Expertise

We can finally answer the question we posed a few years ago: Yes, we can trust AI-driven test case generation today. The technology has matured into an invaluable asset that speeds up delivery and unburdens teams from repetitive manual tasks.

However, AI will not teach you how to be a good tester; it will only amplify the testing skills you already possess. You can only utilise AI to the extent of your own awareness. AI is a powerful engine, but human QA knowledge is the steering wheel. To avoid bloated test suites, missed edge cases, and a false sense of security, your team must be rooted in QA excellence.